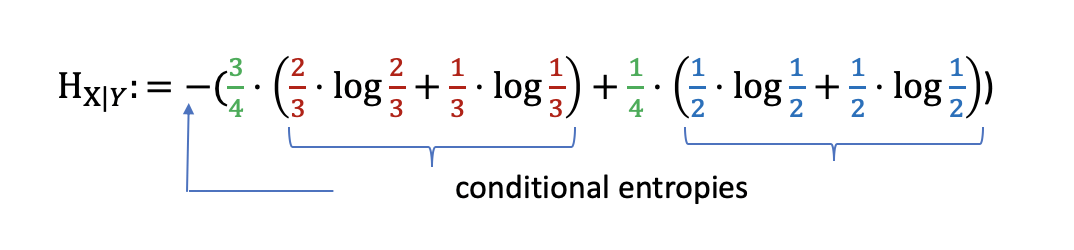

The joint distribution of the two random variables is given in Table 3.2. Each of them takes values over a five-letter alphabet consisting of the symbols a, b, c, d, e. Proceedings of the Eleventh International Conference on. As a result, I defined my conditional differential entropy as

$$h(X \mid Y) = -\iint f(x, y) \log f(x \mid y) \, dx \, dy.$$

Reference (2) discusses similar problems. Let us now suppose that Alice possesses random variable X and Bob. And in Lemma 2.1.2: $H_b(X) = (\log_b a) H_a(X)$. Proof: $\log_b p = \log_b a \cdot \log_a p$. Approximate inference using conditional entropy decompositions. In (1) the conditional differential entropy is defined as. We give a proof of this statement after developing relative entropy. Meanwhile, we also provide a certain subadditivity of the geometric Rényi relative entropy.

Keywords: Belavkin-Staszewski relative entropy; classical-quantum setting; conditional entropy; geometric Rényi relative entropy; mutual information.

Entropy, joint entropy, and conditional entropy of X and Y. If the base of the logarithm is b, we denote the entropy as $H_b(X)$. If the base of the logarithm is e, the entropy is measured in nats. Unless otherwise specified, we will take all logarithms to base 2, and hence all the entropies will be measured in bits.

In mathematical statistics, the Kullback-Leibler divergence (also called relative entropy and I-divergence), denoted $D_{\mathrm{KL}}(P \parallel Q)$, is a type of statistical distance: a measure of how one probability distribution P is different from a second, reference probability distribution Q.

Finally, the subadditivity of the Belavkin-Staszewski relative entropy is established, i.e., the Belavkin-Staszewski relative entropy of a joint system is less than the sum of those of its corresponding subsystems, with the help of some multiplicative and additive factors. In particular, we show the weak concavity of the Belavkin-Staszewski conditional entropy and obtain the chain rule for the Belavkin-Staszewski mutual information.
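The classical quantities mentioned above (entropy, conditional entropy, the change-of-base rule, and relative entropy) can be sketched in a few lines of Python. This is only an illustrative sketch: the 2x2 joint distribution `JOINT` is a made-up example, not the Table 3.2 distribution, and the function names are hypothetical helpers rather than anything from a library.

```python
import math


def entropy(probs, base=2.0):
    """Shannon entropy of a probability vector; base 2 gives bits, base e gives nats."""
    return -sum(p * math.log(p, base) for p in probs if p > 0)


def conditional_entropy(joint, base=2.0):
    """H(X|Y) for joint[x][y] = p(x, y), computed as -sum p(x, y) log p(x|y)."""
    n_y = len(joint[0])
    # Marginal of Y: sum each column over x.
    p_y = [sum(row[y] for row in joint) for y in range(n_y)]
    total = 0.0
    for row in joint:
        for y, p_xy in enumerate(row):
            if p_xy > 0:
                total -= p_xy * math.log(p_xy / p_y[y], base)
    return total


def kl_divergence(p, q, base=2.0):
    """Relative entropy D(P || Q); infinite if P has mass where Q has none."""
    total = 0.0
    for p_i, q_i in zip(p, q):
        if p_i > 0:
            if q_i == 0:
                return math.inf
            total += p_i * math.log(p_i / q_i, base)
    return total


# Hypothetical joint distribution p(x, y); rows index x, columns index y.
JOINT = [
    [0.50, 0.25],
    [0.00, 0.25],
]

P_X = [sum(row) for row in JOINT]
P_Y = [sum(row[y] for row in JOINT) for y in range(len(JOINT[0]))]
H_XY = entropy([p for row in JOINT for p in row])   # joint entropy H(X, Y)
H_Y = entropy(P_Y)                                  # marginal entropy H(Y)
H_X_GIVEN_Y = conditional_entropy(JOINT)            # conditional entropy H(X|Y)
```

With these helpers, the chain rule $H(X, Y) = H(Y) + H(X \mid Y)$ can be checked numerically by comparing `H_XY` with `H_Y + H_X_GIVEN_Y`, and the change-of-base rule by comparing `entropy(P_X, math.e)` with `math.log(2) * entropy(P_X, 2)`.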

In this paper, two new conditional entropy terms and four new mutual information terms are first defined by replacing quantum relative entropy with Belavkin-Staszewski relative entropy. Belavkin-Staszewski relative entropy can naturally characterize the effects of the possible noncommutativity of quantum states. Next, their basic properties are investigated, especially in classical-quantum settings.

In this paper we show that the conditional entropy of nearby orbits may be a useful tool to explore the phase space associated with a given Hamiltonian. A close relationship between the PAIF and the conditional entropy test (van der Heyden et al., 1998) is established by re-writing both in terms of joint. Bayes' theorem, an important concept underlying the Bayesian method, is used to calculate conditional probability in machine learning applications that includes.
